I’d like to preface this by stating that Amazon is obviously a huge company, and my opinions are just that: one person’s opinions. Some people will probably share my frustrations, while others have had a completely different experience.

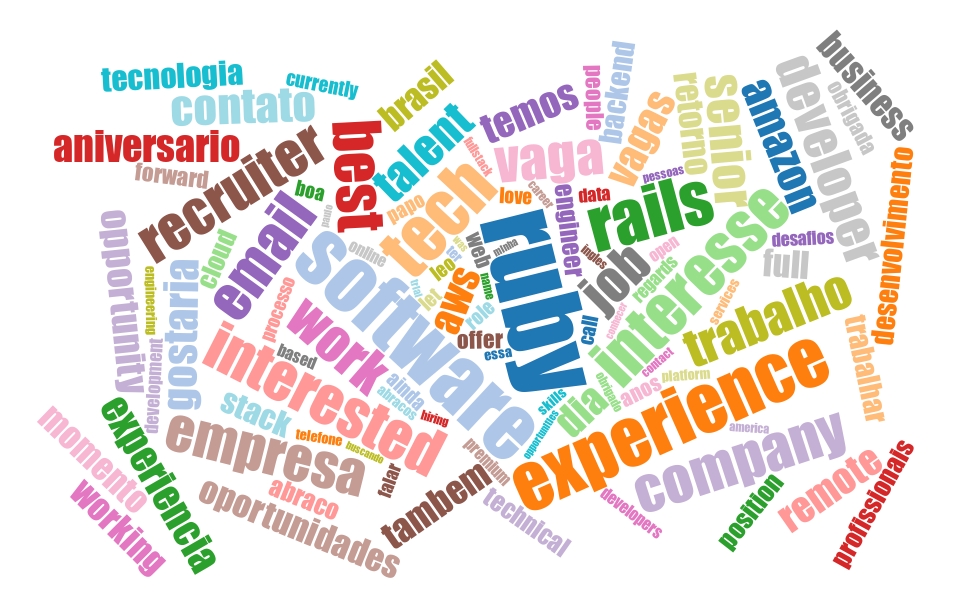

I interviewed at AWS in early 2020, pre-pandemic. The interview process is grueling, and I spent considerable effort preparing for the five-hour pantomime of absurd algorithm trivia and “tell me about a time when you said no” behavioral questions. COVID-induced visa processing delays pushed my start date back several times. The high-stress interview process and the years-long wait built up tremendous anticipation. In hindsight, I probably had somewhat unrealistic expectations when I finally joined the company in late 2021.

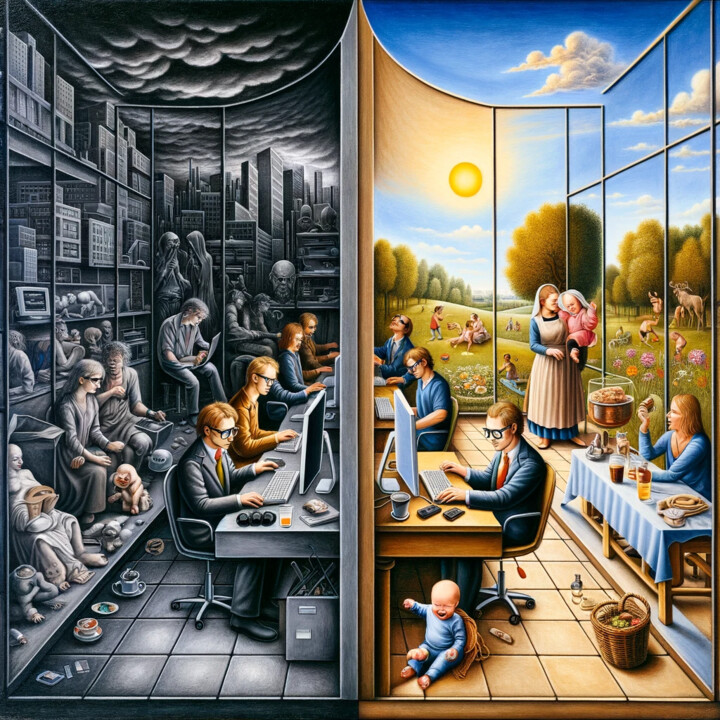

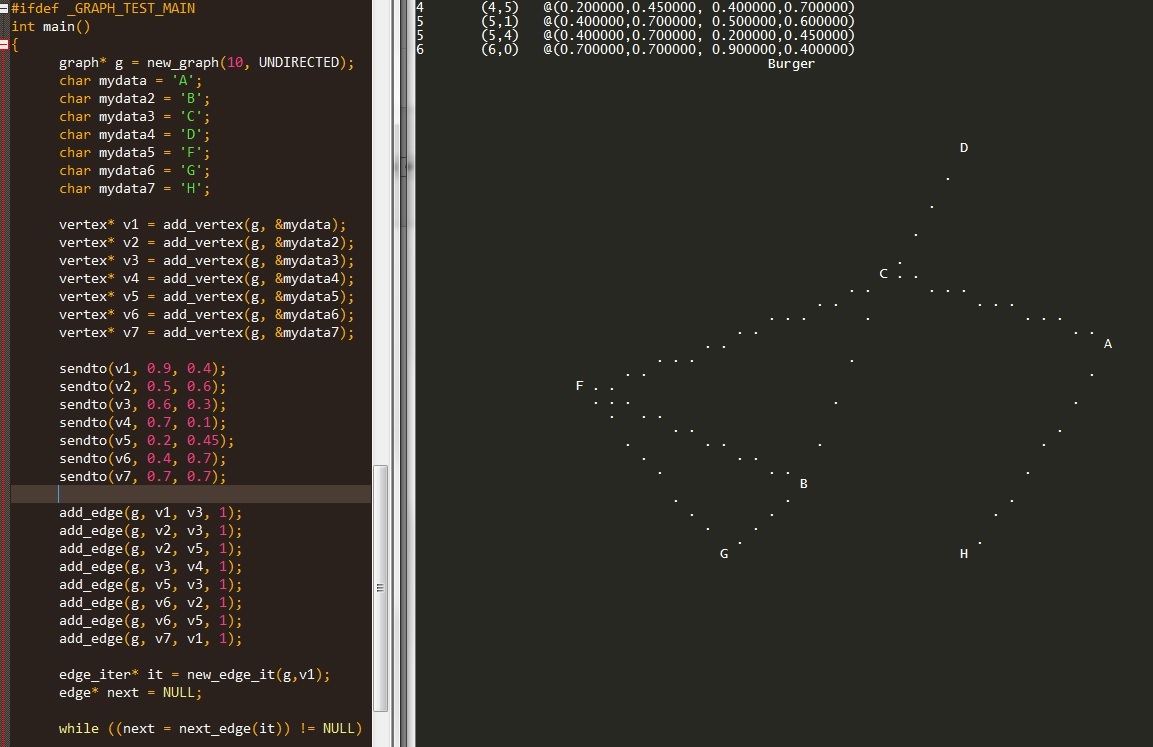

Regardless, I was quite frankly shocked after my first couple of weeks, and my first impression was that this kind of work was not for me. As a software engineer, I expected to eventually do some software engineering. I’m not sure how to describe the work my first team was doing, but I can’t in good conscience call it software engineering.